Why I do not lose (much) sleep over Generative Artificial Intelligence.

The only thing I lose sleep over in this latest round of hype over generative artificial intelligence is the people in board rooms who think these tools are intelligent. The tools are undeniably useful, a ton of fun to play with, and wildly popular. We share some obvious stock picks that stand to benefit, and some views on judging intelligence.

Let us get the easy part over with.

You would have to be living under a rock not to have clocked the huge global interest in generative artificial intelligence following the launch of ChatGPT, Midjourney and other tools.

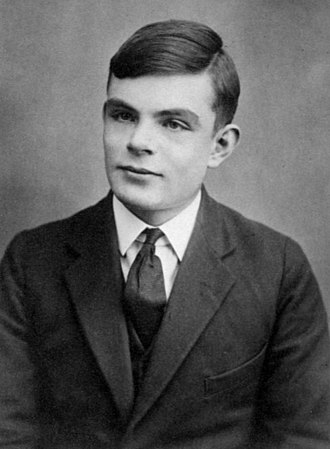

The only thing I want to do is share some of the obvious stock picks, a few of my own fun experiments (nothing new there) and a quick callout to the insight of one of my favorite thinkers in artificial intelligence: the late and great Alan Turing.

The reason I want to mention Alan Turing is two-fold:

- He was extremely creative and intelligent but persecuted in his own time for being different.

- He came up with an extremely simple test any board room can use to evaluate ChatGPT.

I won't dwell on the first item. It is a shabby story in the history of the Establishment in Great Britain, at the time Alan Turing was alive. You can read about it on Wikipedia.

It is the second item which is noteworthy for this article. It is a simple variation of the classical Turing Test that was invented by Alan Turing as a simple and commonsense methodology to arrive at an answer to the question:

Is a candidate AI intelligent enough to fool a human?

We will get to that later, but I want to share a very simple Turing Game that any able group of school students could play, with ChatGPT, to establish their own value to society.

Rest assured, you are probably doing it already, and it is no great insight on my part.

However, this is an investment forum, and so it is worth sharing.

While I am confident that most school children would win the Turing Game very easily, I am not so sure about typical members of the corporate board room.

They are so accustomed to placing high value on form and style over substance that they might easily fail the test. It is for such people that I am keen to spell out the test, such as it is.

Stock Selections

Generative artificial intelligence models belong to the wider family of neural network style models that have exploded to prominence in the last fifteen years. Like all such models, they are not programmed explicitly, but learn from exposure to a vast amount of curated data.

There are many different types of such models, and most readers are probably familiar with surveys and explainers that go into detail. For my purposes, only two things really matter:

- Generative models of the type called Large Language Models learn from huge datasets.

- The models are largely unsupervised, meaning they learn the structures inherent in the data.

In simple terms, for they are not simple models, the Large Language Models are learning the joint likelihood that one or other word will appear in the context of other words.

This is more or less how sentence completion tools work, like the one I am using now.
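For readers who like to see it written down, the "joint likelihood" idea is usually expressed with the chain rule of probability. This is the standard textbook form for models that predict one word at a time, not a description of any particular product. The symbols $w_1, \dots, w_n$, standing for the words in a sequence, are notation introduced here for illustration.

$$
P(w_1, w_2, \dots, w_n) = \prod_{t=1}^{n} P(w_t \mid w_1, \dots, w_{t-1})
$$

Sentence completion then amounts to picking a likely $w_t$ given the words already typed.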

The main takeaway is this:

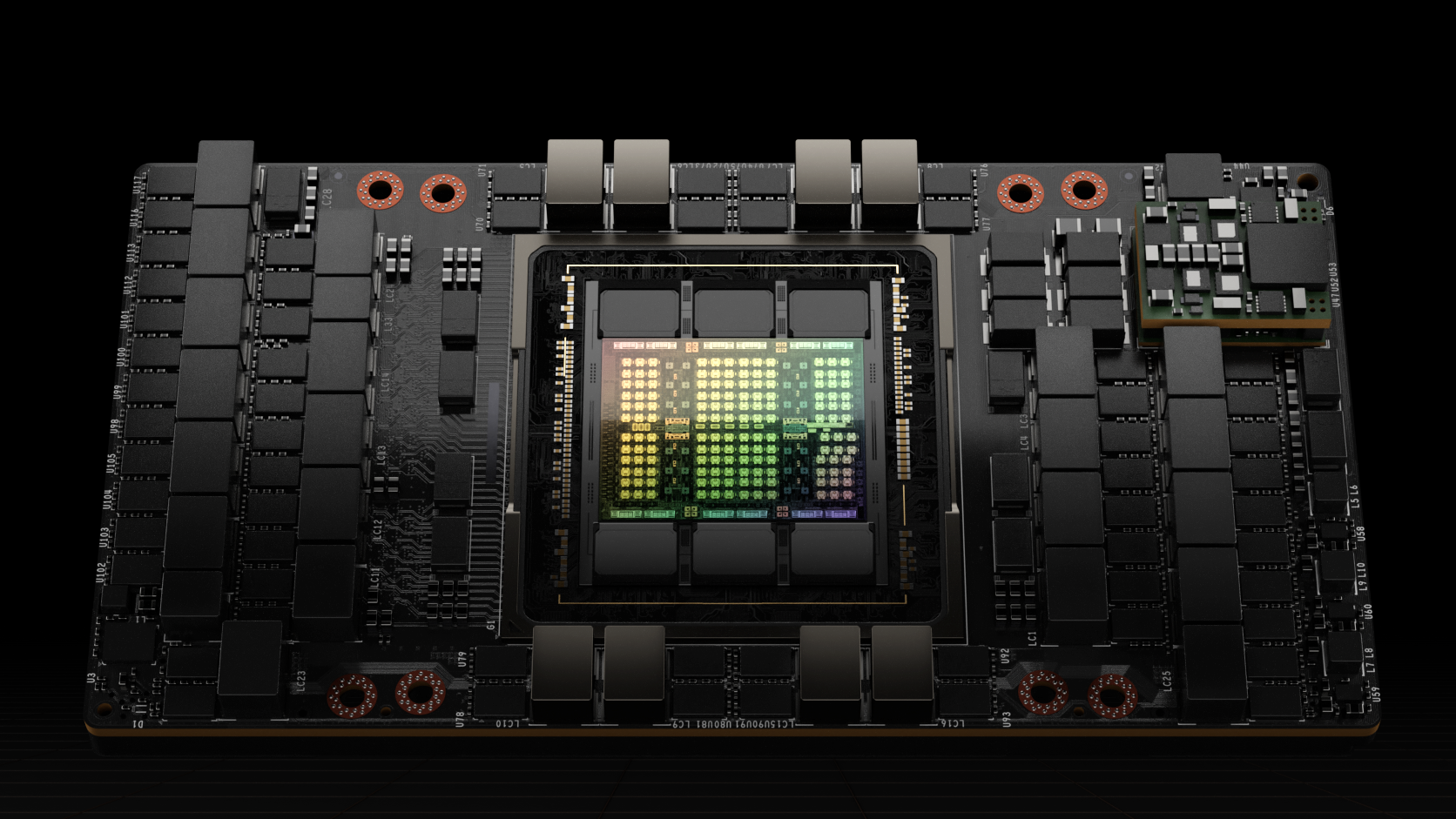

It takes great gobs of data, very many high-performance Graphics Processing Units (GPU), and a dedicated cloud computing center with a very fast networking backbone to train such models.

You can read that in many places, and in the spirit of "picks and shovels" investing the place to invest in listed companies is pretty clear: the high-performance computing and cloud plays.

The three majors:

Nvidia NVDA

Microsoft MSFT

Alphabet GOOG

are the main ones of interest to me.

Microsoft is an obvious choice, given their large Azure cloud offering, investment in ChatGPT creator, OpenAI, and their collaboration with Nvidia on AI supercomputers in the cloud. Nvidia is the clear market leader in hardware. The reason is that the kind of dense matrix arithmetic that is employed in the latest round of models is an ideal fit for the NVIDIA high-performance computing architecture. It is also a very good fit for Google's home-grown Tensor Processing Unit (TPU) architecture. We expect Google to soon release a similar AI from their DeepMind subsidiary, the people who built the AlphaGo and AlphaFold systems.

Of course, you will read a lot about money going into early-stage start-ups, like OpenAI, but I am assuming you can only invest in listed companies. The logical way to play the next phase of the artificial intelligence boom is via high performance computing.

You can read about the NVIDIA Hopper Architecture here. It is named after Rear Admiral Grace Hopper, a formidable intellect who played a key role in the development of compilers.

You can think of compilers as some of the first high-level language models for translating code that is easy for humans to read and understand into the machine language of the system.

One might think NVIDIA is the only game in town, but that is not true. The parent company of Google, Alphabet, controls a very powerful alternative called the Tensor Processing Unit (TPU). They actually got the jump on NVIDIA early, with the concept of a dedicated math unit that could do more than simply multiply two matrices. This one works with tensors.

Tensor is a word that sounds a lot cooler than matrix. Indeed, Albert Einstein used them in his General Theory of Relativity a hundred plus years ago. However, the usage here is a bit more prosaic. The tensor is just an array of numbers that is "bigger" than square, like a 3D chessboard. TPUs deal with numbers arranged like that.
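To make the 3D chessboard picture concrete, here is a minimal Python sketch. It uses plain nested lists rather than any real accelerator library, and the names `make_cube` and `shape` are made up for this illustration. It only shows what "an array with three indices" means, not how a TPU works.

```python
# A toy "3D chessboard": 8 layers, each an 8 x 8 board of numbers.
# Plain nested lists are used so the sketch needs no extra libraries.
SIZE = 8


def make_cube(size):
    """Build a size x size x size array filled with zeros."""
    return [[[0 for _ in range(size)] for _ in range(size)] for _ in range(size)]


def shape(array):
    """Return the length of each nested level, outermost first."""
    dims = []
    while isinstance(array, list):
        dims.append(len(array))
        array = array[0]
    return tuple(dims)


board = [[0] * SIZE for _ in range(SIZE)]  # a matrix: two indices (row, column)
cube = make_cube(SIZE)                     # a 3D tensor: three indices

cube[2][5][7] = 1  # layer 2, row 5, column 7

print(shape(board))  # (8, 8)
print(shape(cube))   # (8, 8, 8)
```

The only difference between the matrix and the tensor here is the number of indices you need to pick out a single number.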

The hardware design for the TPU was pretty creative and drew heavily on the MIPS processor of earlier years. This was a numerical computation heavy processor whose design owed much to the needs of military signals processing in radar and sonar.

I bet you never thought that doing mathematics, like multiplying numbers out in cubic arrays, or higher, would be important to composing generative art like my cover photo. I made this using the image generator AI Midjourney. All I did was ask it for this image:

Happy robot family cavorting in a field of lithium with windmill.

That is just me getting ahead of the curve with a future facing ESG marketing campaign.

You have to admit, that little robot family is kind of cute, but I strayed off-piste there.

The above three stocks are figuring quite prominently now. However, the market is perhaps a little overheated at this point, due to the excess liquidity still in this market.

In my view, this boom will go on for some time, and there may be a "reality moment" that has yet to hit the broader stock market on the actual powers of generative artificial intelligence. A whole bunch of new hardware is coming, such as the Graphcore IPU. For many years, venture firms really did not put much capital into semiconductors. The generative AI boom will fix that.

With this in mind, I will stop there, and simply draw your attention to other stocks like Meta META and Amazon AMZN, that have excellent credentials in artificial intelligence. This will likely evolve into a protracted ding-dong knockout fight between the tech giants.

Why Even Worry a Little Bit?

Since I am one of those people who never actually grew up, my mind turns always to the effect of such technologies on children. Will they have a future? Should they worry? In short:

What are the wider social implications of such powerful generative artificial intelligence tools? How does the future look for our children?

There are many implications, and I can't possibly imagine all of them, nor do them justice.

However, since I did train as a mathematical physicist, and I read the papers describing how these tools are built, and also played with them for a whole afternoon, I can suggest this:

I think it is pretty easy to tell that these tools are not actually intelligent.

Whew! Aside from the sacked employees of BuzzFeed, the rest of us are (mostly) safe.

However, since the world of work involves power relationships that trickle down from above, it may be a good idea to arm yourself with good arguments as to your intelligence, and not just wait to vent in the exit interview. There is a need for some plain talking in social discussion.

In What Way is Generative Artificial Intelligence Smart (or not)?

The generative artificial intelligence tools that I have examined are all trained from truly giant databases of human-generated content. This is scraped from the Internet, from repositories of computer code, like GitHub, code advice question and answer sites like Stack Overflow, and a wide variety of machine-readable works of literature.

While it costs a lot to train the models, and a considerable amount to prepare the data set for use in machine learning, the data itself was ultimately generated by intelligent humans. You did not actually have to pay any of those people for the task of creating intelligent content.

This explains the commercial appeal.

If you can absorb great quantities of data from people who are intelligent and can work out good and effective ways to model and capture the embedded statistical patterns, then you may be able to produce a computer model that does a good job of imitating humans.

For many years, this idea did not progress very far. However, slow and steady progress in computer hardware, combined with very large R&D budgets for AI from digital marketing, has helped the field leg its way up to the present (very high) standards of human imitation.

The availability of large, curated datasets has proven very important.

For instance, there is this book corpus from The Pile. It contains 36.8 GB of compressed text data which is the content of 196,700 books, on various topics. I am not sure how anybody got the rights to distribute that many books for free, but there you go. That is start-up research. Forget how anybody got their hands on that many books. Nothing to see there.

I did some math, and worked out that if you had 80 reading years at your disposal you might have to read 7 books a day to work your way through that part of The Pile. Forget about the rest of The Pile. Other parts of it include a very good portion of GitHub. This is a code repository, and the download version of that part is 100GB of compressed data.
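The seven-books-a-day figure is easy to check. Here is the arithmetic as a short Python sketch, assuming 365 reading days a year with no days off and ignoring leap days. The variable names are just for illustration.

```python
BOOKS = 196_700       # book count quoted for that part of The Pile
READING_YEARS = 80    # assumed reading lifetime
DAYS_PER_YEAR = 365   # leap days ignored

books_per_day = BOOKS / (READING_YEARS * DAYS_PER_YEAR)
print(f"{books_per_day:.1f} books per day")  # 6.7 books per day
```

That rounds up to seven books a day, every day, for eighty years.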

Needless to say, it took a lot of smart humans a long time to write 196,700 books, and very many computer programmers to write the code in GitHub and answers on Stack Overflow.

The business model of generative artificial intelligence is very simple and powerful.

Go build a very big supercomputer to read everything and try and make sense of it.

Evidently, whatever does come out, will be smarter than dumb.

The computer ought to know something about many things after reading that much!

How does Generative Artificial Intelligence fit the Patterns?

The generative artificial intelligence tools that I have examined are all trained from truly giant databases using a probability-based approach to modelling statistical patterns in text. This is not a simple "count all the words" thing. Nor is it the next best thing "count all the pairs of words", or even the triples, or most generally the n-tuples of n words in a row.

Google did that a long time ago. It works, up to a point, but is pretty useless for generating human-like text in any great detail. Later researchers started experimenting with models that could embed some form of "memory" as they read, so that two words here could in some way be connected with three words, over there, in constructing a meaningful statement.
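For the curious, the "count all the pairs of words" approach can be sketched in a few lines of Python. This is a toy built on a made-up two-sentence corpus, not anyone's production system, and the function names are hypothetical.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, purely for illustration.
corpus = "the cat sat on the mat . the cat ate the fish ."


def bigram_counts(text):
    """Count how often each word follows each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn the raw counts after `word` into probabilities."""
    total = sum(counts[word].values())
    return {w: c / total for w, c in counts[word].items()}


counts = bigram_counts(corpus)
print(next_word_probabilities(counts, "the"))
# {'cat': 0.5, 'mat': 0.25, 'fish': 0.25}
```

The weakness is easy to see: the model only ever looks one word back, so it has no way to connect words that sit far apart in a sentence.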

Still other researchers found that you could sometimes map words into a mathematical space of vectors, or tensors, so that you could do algebra on them. What I mean by that is that you could capture the meaning of the word Queen, as it related to the word King, as some sort of differential meaning. Subtract "Man" from King and add "Woman" to get Queen.

It sounds like science fiction, but it is a ruse that works, after a fashion.
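The King and Queen trick can be shown with a toy Python sketch. The two-dimensional vectors below are hand-picked so that the sum works out exactly. Real models learn vectors with hundreds of dimensions from data, and the match is only approximate. The names `vectors`, `add`, `sub` and `nearest` are invented for this example.

```python
import math

# Hand-picked 2D vectors, invented for illustration only.
# Axis 0 loosely means "royalty", axis 1 loosely means "gender".
vectors = {
    "king": (1.0, 1.0),
    "queen": (1.0, -1.0),
    "man": (0.0, 1.0),
    "woman": (0.0, -1.0),
    "apple": (-1.0, 0.0),
}


def add(a, b):
    """Add two vectors component by component."""
    return tuple(x + y for x, y in zip(a, b))


def sub(a, b):
    """Subtract vector b from vector a component by component."""
    return tuple(x - y for x, y in zip(a, b))


def nearest(target, exclude):
    """Return the word whose vector is closest to target (Euclidean distance)."""
    candidates = (w for w in vectors if w not in exclude)
    return min(candidates, key=lambda w: math.dist(vectors[w], target))


result = add(sub(vectors["king"], vectors["man"]), vectors["woman"])
print(nearest(result, exclude={"king", "man", "woman"}))  # queen
```

The input words are excluded from the search, which is the usual convention for this kind of analogy puzzle.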

When you put such ideas together, along with a host of other genuinely innovative tricks, you get to the present state-of-the-art in fitting a giant probability distribution of text, and text sequences, with massive datasets of real human generated pattern data.

This tells the bot that "Python" code has different keywords than "C++" code.

It also tells the bot to expect similar length lines, maybe with rhyme, for poetry, and a beginning, middle and end narrative structure for stories and essays.

It also tells the bot that when people like me ask for auto-generated "Conspiracy Theories" we are probably a little bored on a Sunday morning, and desire light entertainment.

Some Simple Examples to Uncover (Un)Intelligence

Learning statistical patterns sounds like a crude way to mimic intelligence, and it surely is just that: crude. However, as the models demonstrate, it can be very effective when the model is very big, with loads of free parameters, is well structured, and is well trained. The interesting feature of the latest developments, in both text and image generation, is that these models learn such structures at both small and large scales.

To reframe this idea in simple terms, you can easily recall the struggle in writing student essays to pass from the high-level essay structure: the beginning, middle, and end, to the relevance of each paragraph and sentence to the essay topic, while maintaining the rhetorical thread.

Something similar happens when an artist frames out the structure of a work as a sketch.

There is the big picture, the small picture, and the totality - which is the Gestalt.

These models are really good at all steps, and especially good at the Gestalt.

However, when you play with them, perhaps more so the textual than the image versions, it becomes clear that the aesthetic expressed is often a little off. More likely than not, a response will contain factual errors, contradictions, or words used in a sense which is analogous to some meaning which is sensible, but not quite right in the sense of human meaning.

This is okay, by the way.

Most people who use these tools fully intend to edit the results.

This means that the joint work is properly a collaboration between human and machine.

Of course, that is obvious. In a chat environment, the net result is formed by the marriage of a human generated question, a machine generated answer, and a human critic in the edit phase. No sensible cheat on a student essay would hand in the raw result. This is not simply born of a desire to mask the origin of a piece of creative work. It is just plain commonsense.

To see what I mean, consider this query to ChatGPT:

Develop a Pop-Tart Conspiracy Theory on why Pop-Tarts always wind up burned on Sunday morning when the toaster is left in the hands of Ageing Boomer Grandpa who can't work his smartphone.

This generates the result:

The Pop-Tart Conspiracy Theory suggests that the burn rate of Pop-Tarts on Sunday mornings is a deliberate effort by the Pop-Tart Corporation to drive sales. The theory posits that the company has embedded a subliminal message in the packaging, instructing Grandpas to set the toaster to the highest setting, resulting in burnt Pop-Tarts. This, in turn, prompts frustration and a desire to purchase a new, high-tech toaster that won't burn the beloved breakfast treat. The conspiracy theory suggests that this is all part of a larger marketing strategy to drive sales of both Pop-Tarts and high-tech toasters.

That is pretty good, but I am sure a budding conspiracy theorist could clean it up some.

You get my drift. These things are a lot of fun.

If you are a student on campus, faced with the requirement to write an essay exploring the role of Critical Race Theory in the wider social context, you might well start with this query:

Write an essay in the spirit of Critical Rodent Theory on the role of the rabbit in propagating oppression of indigenous bandicoots.

You can see what I have done here. I have substituted some analogous words, in a juvenile manner, which suits my sense of humor, to see what happens. I got this:

Critical Rodent Theory is a framework that seeks to examine and understand the complex interplay between different species and their impact on each other. This theory recognizes the existence of power dynamics between species and argues that certain species are oppressed and marginalized by others.

I was very impressed by this well-considered and confident definition of a theory that does not exist. The essay, while short, is very good. I attach the full question and response below.

The essay reads very well except for a few weird passages:

Rabbits compete with bandicoots for food and habitat, causing a decline in the bandicoot population. This has had a devastating impact on bandicoots, as they are now considered a vulnerable species, facing a real threat of extinction. Furthermore, the widespread destruction of their habitat has also had a significant impact on their cultural and spiritual practices, which are closely tied to the land.

I was not aware of the cultural and spiritual practices of bandicoots.

Now we all, and by we I mean you, dear reader, know exactly what to do in response.

We are having fun, of course, but you can immediately sense the danger:

- Don't let schoolchildren (or physicists) use this to draft corporate marketing messages.

- Properly supervise board members before they sack all the creatives and hire robots.

This exercise is probably enough to motivate the Turing Game.

Playing the Turing Game

Since I am trained in a hard-core science called Theoretical Physics, you will understand that I am prone to experiment on anything with one simple goal in mind: create a theory for it.

I don't mean equations. I simply mean a mental model for how the thing works, how I can think abstractly about how it works, and what experiments to do to test my theories.

In the life of any budding physicist, this probably started with insects in jars, progressed to electric current, the interactions between insects, electric current, and parents, and sundry other forms of advanced learning about how to live well and effectively in this world.

The rabbit versus bandicoot essay is one I concocted on my second try in using ChatGPT as soon as I figured out that it was really good at two incredibly useful corporate skills:

- Sounding aggressively confident when you have not got a clue what you are saying.

- Making stuff up to try and guess what it is to say when you don't actually know.

Let us pause for a nanosecond.

Your laugh tells me that you know just how right this assessment truly is.

You can probably tell that I am exceptional in both departments. I once managed to squirm my way out of having failed to vote in a Federal Election with this excuse:

I did not vote on religious grounds. Since the last Census banned the Jedi Religion as a valid response to the question on "What is your Religion" I lost faith in Democracy. I then switched my religious persuasion to Sith Overlord, one of two in this household, and avow my aversion to voting as a system of governance. I now believe in Empire, and so did not vote on religious grounds.

You will appreciate that people like me should not be included in artificial intelligence training data. Obviously, I got off and did not receive a fine. Nobody in the Electoral Office wants to waste the time of a magistrate with an idiot like me, and it probably went up on the Australian Electoral Commission office fridge door.

So dear reader, we know that this bot is super fun, but actually pretty stupid.

The thing we should both worry about is that senior management may not know that. We are going to need a way to manage them through it.

Enter the Turing Game, which is my own version of the Classical Turing Test. It is different in a not very complicated way. ChatGPT can converse on a wide range of topics. The difference of intelligence is less about the range of topics than the bot's self-awareness, sense of reality, as opposed to fantasy, and basic higher-level comprehension.

The system is prone to bluffing when it really does not have a clue.

We just need a framework to compare unfiltered ChatGPT to human supervised ChatGPT. The output would be much better if the bot responded with a question:

Do you want me to make something up, or is this a serious question about something I don't actually know?

In order to figure that out, we need a human critic to review the bot's output. This is the basic thrust of the Turing Test. We humans understand, at a higher level, these aspects of meaning.

Alan Turing was a very remarkable man. Not only did he participate in the Bletchley Park code breaking exercise, the one to crack the German Enigma Code used by U-Boats, but he also played a key role in the early mathematical investigations of automated computing.

This was back in the days when there were adding machines, and folks were just beginning to speculate on electromechanical computers, using cogs and wheels, and electronic computers, using valves and then, later, the transistor. I always have respect for people who think ahead and construct workable mathematical models for engines that don't yet exist.

Alan Turing did that first with the Turing Machine, a conceptual model for a general-purpose computer. The American Alonzo Church made independent, and far reaching, mathematical contributions of his own, at around the same time. Together their ideas are memorialized in the Church-Turing Thesis, a proposition about what kinds of computation can be done with the type of machine they envisaged.

That is more than we need, but you get the idea.

Alan Turing was a Big Cheese. A pioneer, a thinker, and an explainer.

Thinking your way through abstractions to invent new theories of computation is one thing, but the other remarkable thing about Turing was his ability to distill complex and far-reaching questions down to their absolute essentials.

One of the early questions that came up in speculation about a possible artificial intelligence was how you would ever tell when it was actually intelligent. Turing reasoned that the question is properly a relative assessment of capability. You could not just give a simple listicle style test, in the manner of BuzzFeed, and expect to get anything meaningful back again.

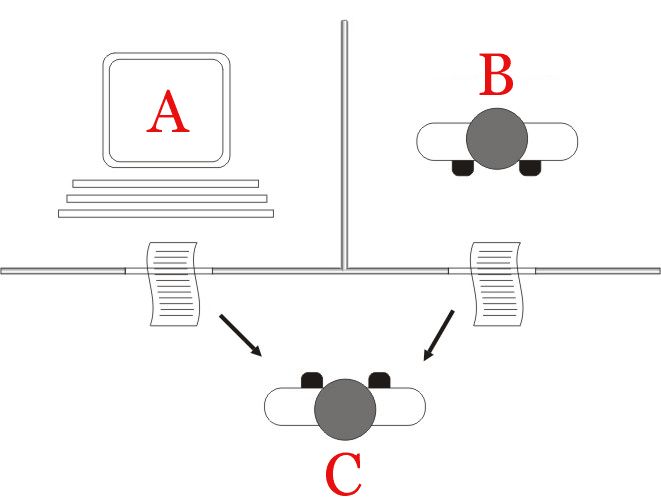

Since intelligence is a human concept, one would need a way to put the computer up versus the human and then see how they performed in comparison. He reasoned that one simple way to express the idea would be in a game of successive questions he called the imitation game.

It is all as simple as A, B, C, with some paper shuffling. These days, use email.

In the figure above, from Wikipedia, we show the basic form of the game. You have two rooms, one with the computer intelligence (A) and the other with a human (B). The idea is that another human (C) gets to ask the same question to each, and then review the answers.

On this basis, the challenge for player C is to construct questions that will provide A and B the best opportunity to differentiate themselves. At the end, the player C is to decide not only which room contains which, but also which is properly intelligent in a human sense.

Until recently, there was no reason to really consider applying a Turing Test style procedure to artificial intelligence programs. In one sense, the game playing tournaments like chess, go, and common video games, showed that human capability can be bested in games of known rules. Furthermore, simple knowledge games of information retrieval, like Google search, show clear advantage falling to computers, and modern database systems.

On the face of it, a Turing Test seems irrelevant.

However, in this world, right now, many management teams will be whiteboarding the near equivalent question to a Classical Turing Test:

Do we replace this human task with ChatGPT or not?

You can see that this is an (A) versus (B) decision, just like the Turing Test.

However, if you recall that generative artificial intelligence programs are trained on data sets that are way bigger than what most people can absorb, we should refine the question:

- Does ChatGPT alone do a good enough job to be left alone unsupervised (A)? or

- Does ChatGPT need a human-in-the-loop critic to keep it on the straight and narrow (B)?

If McKinsey were involved, there would be PowerPoint, but you can guess the rest.

Under what circumstances does handing a Stabilo Boss Highlighter to the human you have left in the marketing department enhance the quality of corporate press releases?

We all know the answer to that question, even before doing the experiment. For this reason, I said that I do not lose (much) sleep over generative artificial intelligence.

However, I was a kid once, and I liked intellectual games. It is good to do well at something, and also good to meet other kids who are better than you at some things.

What would be terrible, I think, is if kids had no opportunity to learn that they plus ChatGPT are actually smarter than ChatGPT just by itself. They are going to use it, we know that. What a good educator, and pedagogue, will do is to devise games that kids can play, both with bots, and amongst each other to both learn about AI, and feel good about it.

I think that is already happening with the visual AI, like the one I used for the title picture.

However, for creative writing and critical thinking exercises, like essays, it would be better to have a structured form of Turing Game. Not boring structured, but fun structured.

Here goes with my version of the Turing Game for schools:

Room A contains The Teacher and ChatGPT. The Teacher is just a monkey who types.

Room B contains A Kid and ChatGPT. The Kid can edit the output if they want.

Room C contains all the other Kids. They invent questions (hopefully on Pop-Tarts).

That is all there is to it. Since ChatGPT is really quite creative in using analogies to bluff its way through answering questions, the kids can pick up on that. Since they are children, they are a lot smarter than most adults at bluffing, cheating, and making stuff up.

At the end, the kids all learn that they are smarter than their teacher.
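For anyone who wants to run the three rooms as a classroom exercise, the bookkeeping can be sketched as a toy Python program. Everything here is a stand-in. `raw_bot` is a hypothetical placeholder that fakes a chatbot, the `[invented detail]` marker is a crude proxy for made-up content, and the judge is a simple rule rather than a room full of real kids.

```python
import random


def raw_bot(question):
    """Hypothetical stand-in for a chatbot: fluent, confident, partly invented."""
    return f"A confident answer about {question}. [invented detail]"


def room_a(question):
    """The Teacher types the question and passes the answer on unchanged."""
    return raw_bot(question)


def room_b(question):
    """The Kid reads the bot's draft and strikes what looks made up."""
    draft = raw_bot(question)
    return draft.replace(" [invented detail]", "")


def room_c_judge(answers):
    """Toy judge: prefer the first answer without a flagged invention."""
    for index, text in enumerate(answers):
        if "[invented detail]" not in text:
            return index
    return 0


def play_round(question, rng):
    """Play one blind round and return the label of the winning room."""
    entries = [("A", room_a(question)), ("B", room_b(question))]
    rng.shuffle(entries)  # Room C should not know which room wrote which answer
    winner = room_c_judge([text for _, text in entries])
    return entries[winner][0]


print(play_round("Critical Rodent Theory", random.Random(0)))  # B
```

In a real classroom the judge function is replaced by the kids in Room C, and the interesting part is the argument they have about why they picked one answer over the other.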

Pretty soon, if they are not handicapped in a cruel way by not having lived long enough to have actually read all of GitHub and 196,700 books on random things, the kid who is in the room editing the output will spot some obvious garbage and highlight it.

When quizzed about Critical Rodent Theory they might respond:

Critical Rodent Theory is not actually a thing. It is true that rabbits do compete for food with native bandicoots, but that is not actually their fault. They are both nice animals, at heart. It is humans who oppress both species by running them over on roads or burning down forests. I like fluffy bunnies. I have one as a pet. I also like bandicoots, but they are native animals. You can't have them as pets because that would be mean, and I am not a mean person.

You see, there is no reason to actually fear ChatGPT.

Kids are smart. They will figure it out for themselves very quickly.

It is managers and politicians that you should worry about.

The Last Word: Appropriate Disciplinary Actions

The behavior of the author has been judged unacceptable in relation to:

Pop-Tart Conspiracy Theory Infractions

The human resources department issued this request to ChatGPT:

Develop a staged and proportionate behavioral intervention for use by human resources to dissuade our errant corporate physicist from using ChatGPT for generating inappropriate conspiracy theories.

Here is the new corporate policy covering such matters at Jevons Global.

Staged and Proportionate Behavioral Intervention Plan

Here is a staged and proportionate behavioral intervention plan:

Verbal Warning: HR should schedule a private meeting with the corporate physicist to discuss the issue of using ChatGPT to generate inappropriate conspiracy theories. During the meeting, HR should express concerns about the negative impact on the company's reputation and explain that this behavior is not acceptable.

Written Warning: If the behavior continues, HR should issue a written warning outlining the specific incidents and the consequences of continued inappropriate behavior, including potential disciplinary action.

Performance Improvement Plan: If the behavior persists, HR should develop a performance improvement plan to address the issue. This plan could include specific steps the corporate physicist must take to stop using ChatGPT for inappropriate purposes, as well as consequences for non-compliance.

Disciplinary Action: If the behavior continues despite the performance improvement plan, HR should consider taking disciplinary action, up to and including termination of employment.

It is important for HR to be consistent, fair, and impartial in its enforcement of the intervention plan. It may also be beneficial to involve legal counsel to ensure compliance with applicable laws and regulations.

Yikes. I am done for, now. Gotta run...

Image credit: midjourney.ai with this prompt:

"Happy robot family cavorting in a field of lithium with windmill."

No changes. First image off the top of the generative stack.
