Do you really need more information?

Ellerston Capital

Last week I was at the Livewire studios to film another “Buy Hold Sell” video, the popular segment in which fund managers are asked to make a call on three to five stocks nominated by the Livewire team.

I did my first one with them a few months back, on the last day of August 2017, just after reporting season. It clearly became a source of amusement for many friends and colleagues on two levels. First, I seem to have taken the brief of “keep it short” too far (click here for a laugh at poor Matt Kidman trying to figure out what to do with my truncated answers).

On the second level, a number of them seemed to find it amusing that a global fund manager was offering opinions on Australian stocks that we don’t cover every day. It’s not as if I have no experience with these stocks: I spent the first five years of my career in the Australian Equities team at BT Funds Management covering them, and we spend a lot of time covering global airlines here at Morphic (relevant for Qantas). It’s just that there is an expectation that a local “specialist” should be able to do this better.

So it was with interest that, ahead of the next “Buy Hold Sell” segment, I revisited this week the calls I made in late August.

Figure 1 – My Scorecard

Source: Bloomberg, Team Analysis

Well, that turned out better than I (and friends) expected! Six wins, two draws and a loss.

That is a nice point to segue into a topic that we care deeply about at Morphic and that, we would argue, is deeply misunderstood by market observers and participants: how much information do you need to make a decision?

Keeping the “book club” theme going, I would like to recommend to readers another book that is dear to my investing heart (no, it’s not Ben Graham’s Security Analysis). It is Psychology of Intelligence Analysis, and it comes from that well-known investment house, the Central Intelligence Agency (CIA) of the USA.

Huh? Well, if you think about it, intelligence analysis has a lot in common with stock analysis: you are required to make a decision on something you have incomplete information about, using a combination of quantitative factors (satellite photos, in the CIA’s case) and qualitative ones (rumors heard through spy networks). You then have to present those forecasts and act on them.

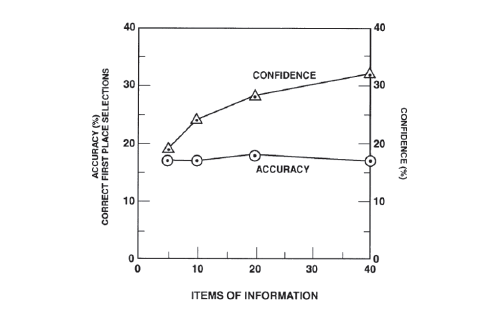

One chapter of the book openly questions whether analysts need more information. The natural answer is yes, more information would help. But it seems to come at a cost. The chapter describes an experiment with horse-racing handicappers, using races where the results were already known. Each handicapper was asked to choose five pieces of data (such as race distance) out of the forty they would normally use, then pick a winner and assign a confidence level to that pick. The experiment was then repeated with ten pieces of data, and so on, with the confidence question asked each time. The results are below and strike us as rather interesting.

Figure 2 – The confidence level seems to increase faster than the accuracy when we are given more information

Source: Psychology of Intelligence Analysis, Center for the Study of Intelligence

What’s remarkable is that accuracy barely improves (and remember, these are full-time handicappers whose livelihood depends on getting the odds right), but look at their confidence soar!

I see this time and time again in stock presentations. An analyst who researches a stock becomes more and more confident that they are right, when in reality they are still using a small number of data points to make the decision. The extra research is just making them more confident in it.

So how does one stop this, or at least limit it? It requires building an investment process that forces an analyst to do a certain level of work, because insight and knowledge are still required, but that, counterintuitively, stops them at a certain point. That way they retain some uncertainty in their decision, which helps to keep an open mind. The process also builds in a “post-mortem” function to check whether the scenarios that actually played out had been thought of ex ante.
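As a rough illustration of what such a post-mortem check could look like (a sketch only, not a description of Morphic's actual process), one could record a stated confidence with every call and later compare the average confidence with the actual hit rate. The `Call` record, the `calibration_gap` helper and the four sample calls below are all hypothetical and made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Call:
    """One stock call, recorded at the time it was made (hypothetical record)."""
    label: str         # placeholder name for the stock
    confidence: float  # stated probability of being right, between 0 and 1
    correct: bool      # filled in at the post-mortem


def calibration_gap(calls):
    """Average stated confidence minus the realised hit rate.

    A positive gap means the analyst was more confident than accurate.
    """
    if not calls:
        raise ValueError("need at least one call")
    avg_confidence = sum(c.confidence for c in calls) / len(calls)
    hit_rate = sum(c.correct for c in calls) / len(calls)
    return avg_confidence - hit_rate


# Made-up sample data, for illustration only.
calls = [
    Call("Stock A", 0.9, True),
    Call("Stock B", 0.8, False),
    Call("Stock C", 0.7, True),
    Call("Stock D", 0.8, False),
]

gap = calibration_gap(calls)
print(f"Overconfidence gap: {gap:+.2f}")
```

With these invented numbers the average confidence is 80% against a 50% hit rate, so the gap is 0.30. A gap that widens as the research file gets thicker would be the handicapper pattern showing up in one's own calls.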

Overall, more time is spent thinking about other angles or approaches than on becoming an “expert” on the subject. After all, if expertise were what mattered most, sell-side analysts would be the best stock pickers in the industry, when in fact their stock ratings have been shown to be a reverse indicator of future performance!

Let’s see how the next set of results goes, and whether the first set was just pure luck.

For further insights from Morphic Asset Management please visit our website


Chad co-founded Morphic Asset Management in 2012. As a stock picker Chad is also a generalist but has strong regional knowledge of Europe and the Americas. He has also been awarded the CFA Charter.
